By Rama Zakaria

A new report by M.J. Bradley & Associates – based on an extensive review of data, literature, and case studies – shows that coal-fired power plants are retiring primarily due to low natural gas prices, and that the ongoing trend towards a cleaner energy resource mix is happening without compromising the reliability of our electric grid.

The report follows a highly publicized order by Secretary of Energy Rick Perry for a review of the nation’s electricity markets and reliability. Perry wanted to determine whether clean air safeguards and policies encouraging clean energy are causing premature retirements of coal-fired power plants and threatening grid reliability.

The Department of Energy (DOE) just released that long-anticipated review — a baseload study that actually confirms that cheap natural gas has been the major driver behind coal retirements.

Now the M.J. Bradley report affirms that finding, offers even more evidence to support it, and demonstrates that electric reliability remains strong.

The M.J. Bradley report confirms the conclusions of multiple studies, which show that, of the three main factors responsible for most of the decline in coal generation, increased competition from cheap natural gas has been by far the largest contributor – accounting for 49 percent of the decline.

The two other factors are reduced demand for electricity – accounting for 26 percent – and increased growth in renewable energy – accounting for only 18 percent.

Several case studies featured in the M.J. Bradley report offer further proof that coal retirements are driven by economic factors – specifically low natural gas prices:

For example, PSEG President and COO Bill Levis, referring to the shutdown of Hudson Generating Station, said, “the sustained low prices of natural gas have put economic pressure on these plants for some time.” PSEG Senior Director of Operations Bill Thompson also pointed to economic reasons, not environmental regulations, as the basis for the decision to retire the plant.

Florida Power & Light (FPL) cited economics and customer savings as the primary reasons for its plans to shut down three coal units. According to FPL, the retirements of Cedar Bay and Indiantown are expected to save its customers an estimated $199 million. FPL President and CEO Eric Silagy said the decision to retire the plants is part of a “forward-looking strategy of smart investments that improve the efficiency of our system, reduce our fuel consumption, prevent emissions and cut costs for our customers.” Retirement of FPL’s St. Johns River Power Park would add another $183 million in customer savings.

According to the M.J. Bradley report, the overall decline in U.S. coal generation is primarily due to reduced utilization of coal-fired power plants, rather than retirements of those facilities.

Most recently retired facilities were older, smaller units that were inefficient and relatively expensive to operate. On average, coal units that announced plans to retire between 2010 and 2015 were 57 years old – well past their original expected life span of 40 years.

Meanwhile, existing coal plant utilization has declined from 73 percent capacity factor in 2008 to 53 percent in 2016. At the same time, the utilization of cheaper natural gas combined-cycle plants has increased from 40 percent capacity factor to 56 percent.
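For readers less familiar with the term, capacity factor is the share of a plant's maximum possible output that it actually generated over a period. The short Python sketch below illustrates the arithmetic. The 500 MW unit size is a made-up figure for illustration and does not come from the M.J. Bradley report; only the 73 percent and 53 percent capacity factors are taken from the text above.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def capacity_factor(generation_mwh, capacity_mw, hours=HOURS_PER_YEAR):
    """Actual generation divided by the maximum possible generation."""
    if capacity_mw <= 0 or hours <= 0:
        raise ValueError("capacity and hours must be positive")
    return generation_mwh / (capacity_mw * hours)


def annual_generation_mwh(capacity_mw, cf, hours=HOURS_PER_YEAR):
    """Generation implied by a given capacity factor."""
    return capacity_mw * cf * hours


# Hypothetical 500 MW coal unit (illustrative size, not from the report).
unit_mw = 500
gen_2008 = annual_generation_mwh(unit_mw, 0.73)  # 73 percent, per the text
gen_2016 = annual_generation_mwh(unit_mw, 0.53)  # 53 percent, per the text

# Fractional drop in output from the same unit, with no retirement involved.
drop = 1 - gen_2016 / gen_2008
print(f"Output decline from lower utilization alone: {drop:.1%}")
```

Under these assumptions, the same unit produces a little over a quarter less electricity in 2016 than in 2008 without retiring, which is why falling utilization can account for so much of the overall decline.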

As a result, M.J. Bradley estimates that less than twenty percent of the overall decline in coal generation over the past six years can be attributed to coal plant retirements, with reduced utilization of the remaining fleet accounting for the rest of the decline.

Implications of coal retirements for electric grid reliability

As coal plants retire and are replaced by newer, cleaner resources, there have been concerns about potential impacts on the reliability of our electric grid. (Those concerns were also the topic of DOE’s baseload study.)

M.J. Bradley examined the implications of coal retirements and the evolving resource mix, drawing on extensive existing research, including its own reliability report released earlier this year.

These studies conclude that electric reliability remains strong.

These studies also found that flexible approaches to grid management, and new technologies such as electric storage, are providing additional tools to support and ensure grid reliability.

In order to understand that conclusion, consider two factors that are used to assess reliability:

- Resource adequacy, which considers the availability of resources to meet future demand, and is assessed using metrics such as reserve margins.

- Operational reliability, which considers the ability of grid operators to run the system securely in real time to balance supply and demand, and is defined in terms of Essential Reliability Services such as frequency and voltage support and ramping capability.
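As a rough illustration of the first metric, a reserve margin is commonly computed as the capacity available beyond expected peak demand, expressed as a share of that peak. The Python sketch below uses hypothetical numbers throughout: the capacity, peak demand, and 15 percent target are assumptions for illustration, not figures from NERC or the M.J. Bradley report.

```python
def reserve_margin(available_capacity_mw, peak_demand_mw):
    """Spare capacity above peak demand, as a fraction of peak demand."""
    if peak_demand_mw <= 0:
        raise ValueError("peak demand must be positive")
    return (available_capacity_mw - peak_demand_mw) / peak_demand_mw


# Hypothetical region (all values are illustrative assumptions).
capacity_mw = 118_000   # capacity expected to be available at peak
peak_mw = 100_000       # forecast peak demand
target = 0.15           # hypothetical planning target

margin = reserve_margin(capacity_mw, peak_mw)
print(f"Reserve margin: {margin:.1%}")
print("Meets target" if margin >= target else "Below target")
```

A margin that holds steady or grows as coal units retire is one sign that replacement resources are keeping pace with demand.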

As many studies have already indicated, “baseload” is an outdated term used historically to describe the way resources were being used on the grid – not to describe the above factors that are needed to maintain grid reliability.

Here is what M.J. Bradley’s report and other assessments tell us about the implications of the evolving resource mix for grid reliability:

There are no signs of deteriorating reliability on the grid today, and studies indicate continued growth in clean resources is fully compatible with continued reliability

In its 2017 State of Reliability report, the North American Electric Reliability Corporation (NERC) found that over the past five years the trends in planning reserve margins were stable while other reliability metrics were either improving, stable, or inconclusive.

NERC’s report also found that bulk power system resiliency to severe weather continues to improve.

According to a report by grid operator PJM, which has recently experienced both significant coal retirements and new deployment of clean energy resources:

[T]he expected near-term resource portfolio is among the highest-performing portfolios and is well equipped to provide the generator reliability attributes.

DOE’s own baseload study acknowledges that electric reliability remains strong. A wide range of literature further indicates that high renewable penetration futures are possible without compromising grid reliability.

Cleaner resources and new technologies being brought online help strengthen reliability

Studies show that technologies being added to the system have, in combination, most if not all the reliability attributes provided by retiring coal-fired generation and other resources exiting the system.

In fact, the evolving resource mix that includes retirement of aging capacity and addition of new gas-fired and renewable capacity can increase system reliability from a number of perspectives. For instance, available data indicates that forced and planned outage rates for renewable and natural gas technologies can be less than half of those for coal.

Studies also highlight the valuable reliability services that emerging new technologies, such as electric storage, can provide. Renewable resources and emerging technologies also help hedge against fuel supply and price volatility, contributing to resource diversity and increased resilience.

Clean energy resources have demonstrated their ability to support reliable electric service at times of severe stress on the grid.

In the 2014 polar vortex, for example, frozen coal stockpiles led to coal generation outages – so wind and demand response resources were increasingly relied upon to help maintain reliability.

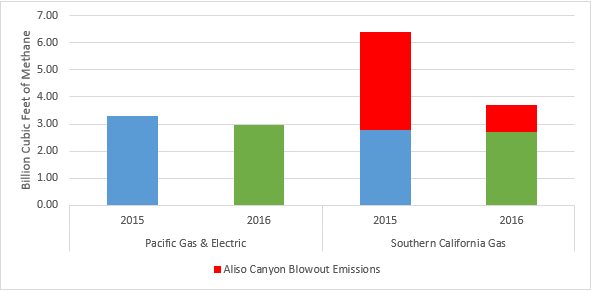

And just last year, close to 100 megawatts of electric storage was successfully deployed in less than six months to address reliability concerns stemming from the Aliso Canyon natural gas storage leak in California.

Regulators and grid operators can leverage the reliability attributes of clean resources and new technologies through improved market design

A 2016 report by DOE found that cleaner resources and emerging new technologies are creating options and opportunities, providing a new toolbox for maintaining reliability in the modern power system.

The Federal Energy Regulatory Commission (FERC) has long recognized the valuable grid services that emerging new technologies can provide. From its order on demand response to its order on frequency regulation compensation, FERC has recognized the value of fast and accurate response resources in cost-effectively meeting grid reliability needs. More recently, FERC’s ancillary service reforms recognize that, with advances in technologies, variable energy resources such as wind are increasingly capable of providing reliability services such as reactive power.

Grid operators are also recognizing the valuable contributions of cleaner resources and emerging new technologies, as well as the importance of flexibility to a modern, nimble, dynamic and robust grid. For instance, both the California Independent System Operator and the Midcontinent Independent System Operator (MISO) have created ramp products, and MISO also has a dispatchable intermittent resource program.

Going forward, it will be increasingly important for regulators, system planners, and grid operators to keep assessing grid reliability needs and to leverage the capabilities of new technologies as they advance. It is also important to continue improving market design, system operation, and coordination to support the emerging needs of a modern, 21st-century electric grid.

The facts show clearly that we shouldn’t accept fearmongering that threatens our clean air safeguards. Instead, working together, America can have clean, healthy air and affordable, reliable electricity.

Read more

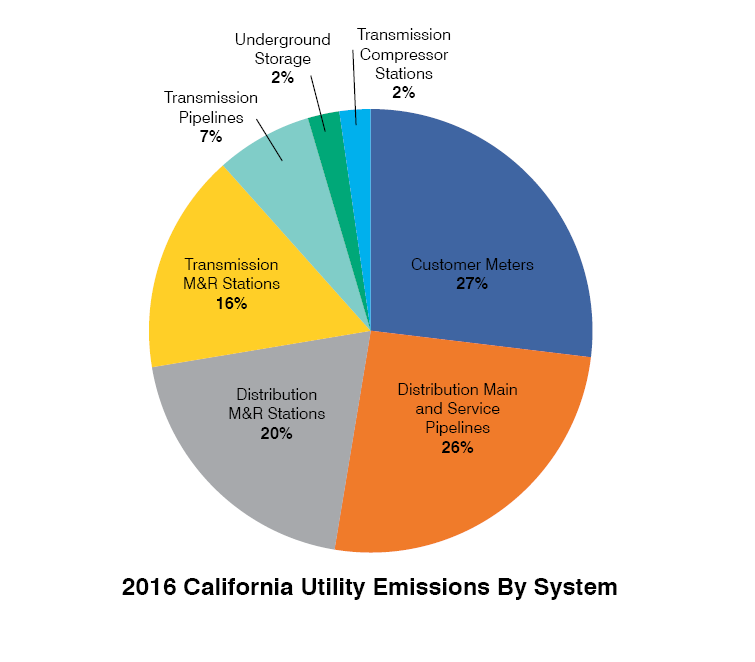

Since the 1892 discovery of oil in California, the oil and gas industry has been a major economic engine and energy supplier for the state. Although this oil and gas production may be broken down into dollars and barrels, it doesn’t tell the story of the potential impact of drilling activity on the lives of the people in Los Angeles and the Central Valley who live right next to these operations.

Since the 1892 discovery of oil in California, the oil and gas industry has been a major economic engine and energy supplier for the state. Although this oil and gas production may be broken down into dollars and barrels, it doesn’t tell the story of the potential impact of drilling activity on the lives of the people in Los Angeles and the Central Valley who live right next to these operations.